LifeCLEF 2019

Results Publication

The overview paper summarizing the results of all LifeCLEF 2019 challenges is available: Springer version - author version (pdf)

Individual working notes of task organizers and participants can be found within the CLEF 2019 CEUR-WS proceedings (in the "LifeCLEF" section).

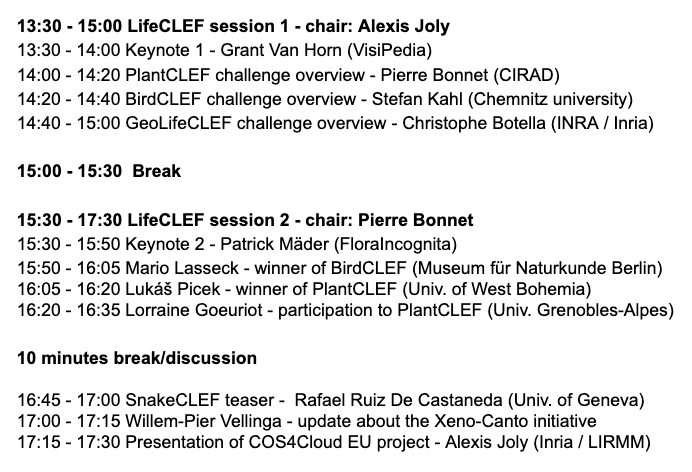

LifeCLEF 2019 Workshop Program - Tuesday, 10th Sept (in the context of CLEF conference)

Schedule

- Dec 2018: registration opens for all LifeCLEF challenges

- Jan 2019: training data release

- March 2019: test data release

- until May 8 (depending on the challenges): deadline for submission of runs by the participants

- 13th of May 2019: release of processed results by the task organizers (online)

- 24th of May 2019: deadline for submission of working notes papers by the participants

- 28th of June 2019: camera-ready working notes papers of participants and organizers

- 9th-12th Sept 2019: CLEF 2019 Lugano, Switzerland

Motivation

Building accurate knowledge of the identity, geographic distribution and evolution of living species is essential for the sustainable development of humanity as well as for biodiversity conservation. Unfortunately, such basic information is often only partially available to professional stakeholders, teachers, scientists and citizens, and is often incomplete for the ecosystems that possess the highest diversity. A noticeable cause and consequence of this sparse knowledge is that identifying living plants or animals is usually impossible for the general public, and often difficult for professionals such as farmers, fish farmers or foresters, and even for naturalists and specialists themselves. This taxonomic impediment was identified as one of the main ecological challenges to be solved during the United Nations Conference in Rio in 1992. In this context, an ultimate ambition is to set up innovative information systems relying on the automated identification and understanding of living organisms as a means to engage massive crowds of observers and boost the production of biodiversity and agro-biodiversity data.

The LifeCLEF 2019 lab proposes three data-oriented challenges related to this vision, in the continuity of the previous editions of the lab.

CLEF Conference and working notes

The LifeCLEF lab is part of the Conference and Labs of the Evaluation Forum: CLEF 2019. CLEF 2019 consists of independent peer-reviewed workshops on a broad range of challenges in the fields of multilingual and multimodal information access evaluation, and a set of benchmarking activities carried out in various labs designed to test different aspects of mono- and cross-language information retrieval systems. More details about the conference can be found here. There is also more information about the CLEF Initiative.

Submitting a working note with the full description of the methods used in each run is mandatory. Any run that cannot be reproduced from its description in the working notes may be removed from the official publication of the results. Working notes are published within the CEUR-WS proceedings, resulting in the assignment of an individual DOI (URN) and indexing by many bibliography systems, including DBLP. According to the CEUR-WS policies, a light review of the working notes will be conducted by the LifeCLEF organizing committee to ensure quality. As an illustration, the LifeCLEF 2018 working notes (task overviews and participant working notes) can be found within the CLEF 2018 CEUR-WS proceedings.

Registration and data access

- Each participant has to register on crowdAI (https://www.crowdai.org) with a username, email and password. A representative team name should be used as the username.

- In order to be compliant with the CLEF requirements, participants also have to fill in the following additional fields on their profile:

  - First name

  - Last name

  - Affiliation

  - Address

  - City

  - Country

- Once set up, participants will have access to the dataset tab on the challenge's page. A LifeCLEF participant will be considered as registered for a task as soon as he/she has downloaded a file of the task's dataset via the dataset tab of the challenge. The precise links to each challenge will be provided here when available.

Registrations are handled on a per-task basis. This means that if a task has multiple challenges (subtasks), a participant can automatically access the data of all challenges in that task. There is one dataset per task, meaning we do not separate datasets on a per-challenge basis.

Contact

Coordination

- Alexis Joly, INRIA Sophia-Antipolis - ZENITH team, LIRMM, University of Montpellier, France, alexis.joly(replace-by-an-arrobe)inria.fr

- Henning Müller, University of Applied Sciences Western Switzerland in Sierre, Switzerland, henning.mueller(replace-by-an-arrobe)hevs.ch

GeoLifeCLEF

- Christophe Botella, Inra – AMAP, Montpellier, France.

- Alexis Joly, INRIA Sophia-Antipolis - ZENITH team, LIRMM, University of Montpellier, France, alexis.joly(replace-by-an-arrobe)inria.fr

- Maximilien Servajean, LIRMM – UM3, Montpellier, France, Maximilien.Servajean(replace-by-an-arrobe)lirmm.fr

BirdCLEF

- Stefan Kahl, Chemnitz University of Technology, Germany, stefan.kahl(replace-by-an-arrobe)informatik.tu-chemnitz.de

- Willem-Pier Vellinga, Xeno-Canto foundation for nature sounds, The Netherlands, wp(replace-by-an-arrobe)xeno-canto.org

- Hervé Glotin, University of Toulon, France, glotin(replace-by-an-arrobe)univ-tln.fr

- Hervé Goëau, Cirad - AMAP, Montpellier, France.

- Alexis Joly, INRIA Sophia-Antipolis - ZENITH team, LIRMM, University of Montpellier, France, alexis.joly(replace-by-an-arrobe)inria.fr

PlantCLEF

- Hervé Goëau, Cirad - AMAP, Montpellier, France.

- Alexis Joly, INRIA Sophia-Antipolis - ZENITH team, LIRMM, University of Montpellier, France, alexis.joly(replace-by-an-arrobe)inria.fr

- Pierre Bonnet, Cirad – AMAP, Montpellier, France, pierre.bonnet(replace-by-an-arrobe)cirad.fr

Credits

- Ivan Eggel, University of Applied Sciences Western Switzerland, Sierre, Switzerland, ivan.eggel(replace-by-an-arrobe)hevs.ch